Data Quality and the NBB_2017_27 Circular

October 13, 2018 / in Basel III, Data Quality, Data Quality Framework, NBB, NBB_2017_27, Regulatory, SAS, Solvency II / by Allan Bowe

When applying financial regulations in the EU (such as Solvency II, Basel III or GDPR) it is common for Member States to maintain or introduce national provisions to further specify how such rules might be applied. The National Bank of Belgium (NBB) is no stranger to this, and releases a steady stream of circulars via its website. The circular of 12th October 2017 (NBB_2017_27, Jan Smets) is particularly interesting as it lays out a number of concrete recommendations for Belgian financial institutions with regard to Data Quality - and states that these should be applied to internal reporting processes as well as to the prudential data submitted. This is well known to affected industry participants, who have already performed a self-assessment for YE2017 and reviewed documentation expectations as part of the HY2018 submission.

Quality of External Data

The DQ requirements for reporting are described by the 6 dimensions (Accuracy, Reliability, Completeness, Consistency, Plausibility, Timeliness), as well as the Data Quality Framework described by Patrick Hogan here and here. There are a number of 'hard checks' implemented in OneGate as part of the XBRL submissions, which are kept up to date here. However, OneGate cannot be used as a validation tool - the regulators will be monitoring the reliability of submissions by comparing the magnitude of change between resubmissions, as well as the plausibility of the data (changes in submitted values over time).

Data Quality Culture

When it comes to internal processes, CROs across Belgium must now demonstrate to accredited statutory auditors that they satisfy the 3 Principles of the circular (Governance, Technical Capacities, Process). A long list of action points is detailed - it's clear that a lot of documentation will be required to fulfil these obligations! And not only that - the documentation will need to be continually updated and maintained. It's fair to say that automated solutions have the potential to provide significant time & cost savings in this regard.

Data Controller for SAS®

The Data Controller is a web-based solution for capturing data from users. Data Quality is applied at source, changes are routed through an approval process before being applied, and all updates are captured for subsequent audit. The tool provides evidence of compliance with NBB_2017_27 in the following ways:

Separation of Roles for Data Preparation and Validation (principle 1.2)

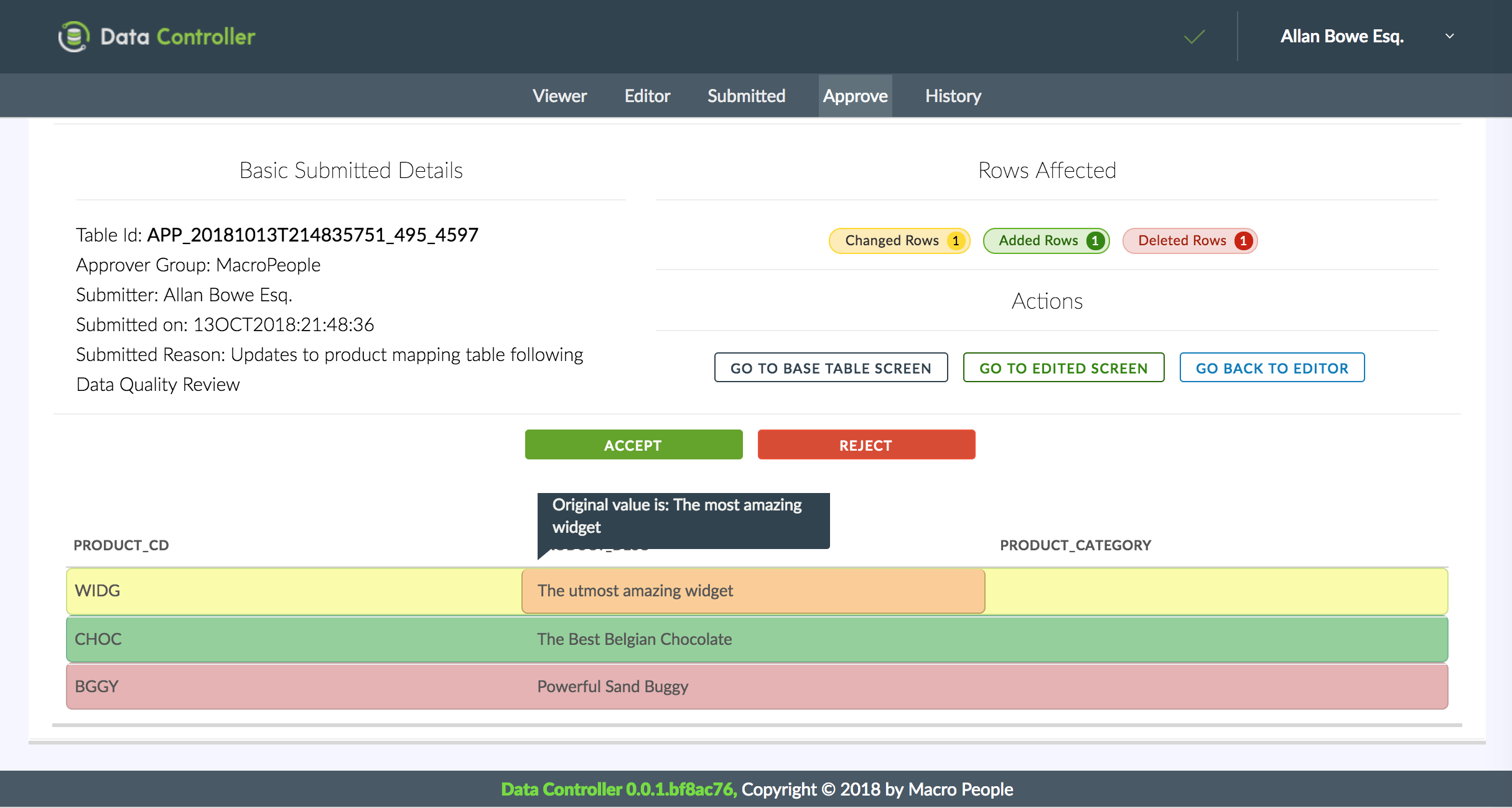

Data Controller differentiates between Editors (who provide the data) and Approvers (who sign it off). Editors stage data via the web interface, or by direct file upload. Approvers are then shown the new, changed, or deleted records - and can accept or reject the update.
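The Editor / Approver split described above can be illustrated with a minimal Python sketch. This is not Data Controller's implementation: the function names `diff_records` and `review`, the `key` parameter, and the assumption that a staged submission is a full replacement of the target table are all hypothetical, chosen only to show how new, changed and deleted records might be presented to an Approver.

```python
from typing import Dict, List

Row = Dict[str, object]


def diff_records(current: List[Row], staged: List[Row], key: str) -> Dict[str, List[Row]]:
    """Classify staged rows against the current table by primary key.

    Assumption: the staged rows are a full replacement of the table, so any
    key present in `current` but absent from `staged` counts as deleted.
    """
    current_by_key = {row[key]: row for row in current}
    staged_by_key = {row[key]: row for row in staged}

    new = [r for k, r in staged_by_key.items() if k not in current_by_key]
    changed = [
        r for k, r in staged_by_key.items()
        if k in current_by_key and r != current_by_key[k]
    ]
    deleted = [r for k, r in current_by_key.items() if k not in staged_by_key]
    return {"new": new, "changed": changed, "deleted": deleted}


def review(current: List[Row], staged: List[Row], approved: bool) -> List[Row]:
    """Return the table after the Approver's decision.

    The staged data replaces the current table only when approved;
    a rejection leaves the current table untouched.
    """
    return list(staged) if approved else list(current)


# Hypothetical example data: an Editor stages an updated table.
current_table = [
    {"id": 1, "amount": 100},
    {"id": 2, "amount": 200},
    {"id": 3, "amount": 300},
]
staged_table = [
    {"id": 1, "amount": 100},
    {"id": 2, "amount": 250},
    {"id": 4, "amount": 400},
]
summary = diff_records(current_table, staged_table, key="id")
```

Here `summary` is what an Approver would inspect before calling `review` with their decision; keeping the diff and the decision as separate steps mirrors the separation of preparation and validation roles.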

Capacities established should ensure compliance in times of stress (principle 2.1)

As an Enterprise tool, the Data Controller is as scalable and resilient as your existing SAS platform.

Capture of Errors and Inconsistencies (principle 2.2)

Data Controller has a number of features to ensure timely detection of Data Quality issues at source (such as cell validation, post edit hook scripts, duplicate removals, and rejection of data with missing columns). Where errors do make it into the system, a full history is kept (logs, copies of files etc.) for all uploads and approvals. Email notifications of such errors can be configured for follow up.

Tools and Techniques for Information Management Should be Automated (principle 2.3)

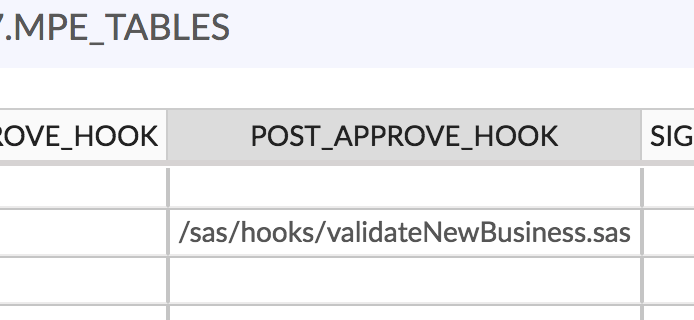

The Data Controller can be configured to execute specific .sas programs after data validation. This enables the development of a secure and integrated workflow, and helps companies to avoid the additional documentation penalties associated with "miscellaneous unconnected computer applications" and manual information processing.
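As a rough illustration of the validate-then-execute pattern, the following Python sketch checks a staged upload for missing columns and duplicate keys, and only runs a follow-on step when the data is clean. It is a sketch under stated assumptions rather than Data Controller's actual behaviour: `validate`, `load_with_hook` and `post_hook` are hypothetical names, and the Python callable merely stands in for the .sas program that would run on the SAS platform.

```python
from typing import Callable, Dict, Iterable, List

Row = Dict[str, object]


def validate(rows: List[Row], required_columns: Iterable[str], key: str) -> List[str]:
    """Return a list of error messages; an empty list means the data is clean."""
    required = set(required_columns) | {key}  # the key column is always required
    errors: List[str] = []
    seen = set()
    for i, row in enumerate(rows):
        missing = sorted(c for c in required if c not in row)
        if missing:
            errors.append(f"row {i}: missing columns {missing}")
        elif row[key] in seen:
            errors.append(f"row {i}: duplicate key {row[key]!r}")
        else:
            seen.add(row[key])
    return errors


def load_with_hook(
    rows: List[Row],
    required_columns: Iterable[str],
    key: str,
    post_hook: Callable[[List[Row]], object],
) -> Dict[str, object]:
    """Run `post_hook` only when validation passes.

    `post_hook` is a stand-in (assumption) for a downstream program,
    e.g. a .sas job triggered after the data has been validated.
    """
    errors = validate(rows, required_columns, key)
    if errors:
        # Rejected uploads never reach the downstream step.
        return {"loaded": False, "errors": errors, "hook_result": None}
    return {"loaded": True, "errors": [], "hook_result": post_hook(rows)}


def total_amount(rows: List[Row]) -> object:
    """Hypothetical downstream step: aggregate the validated rows."""
    return sum(r["amount"] for r in rows)
```

Chaining the downstream step to the validation result, instead of running it by hand, is what keeps the workflow connected: a rejected upload leaves an error list for follow up and nothing else is executed.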

Periodic Review & Improvements (principles 2.4 and 3.4)

The Data Controller is actively maintained with the specific aim of reducing the cost of compliance with regulations such as NBB_2017_27. Our roadmap includes new features such as pre-canned reports, version 'signoff', and the ability to reinstate previous versions of data.

A process for correction and final validation of reporting before submission (principle 3.1)

As a primary and dedicated tool for data corrections, Data Controller can be described once and used everywhere.

List of Divisions Involved in Preparing Tables (principle 3.2)

By using the Data Controller in combination with knowledge of data lineage (e.g. from SAS metadata or a manual lookup table) it becomes possible to produce an automated report identifying exactly who - and hence which division - was involved in both the preparation and the validation of all the source data per reporting table for each reporting cycle.

Processes should integrate and document key controls (principle 3.3)

Data Controller can be used as a staging point for verifying the quality of data, e.g. when data from one department must be passed to another department for processing. The user access policy will be as per the existing policy for your SAS environment.

Summary

Whilst the circular provides valuable clarity on the expectations of the NBB, there are significant costs involved in preparing for, and maintaining, compliance with the guidance. This is especially the case where reporting processes are disparate and rely on disconnected EUCs and manual processes. The Data Controller for SAS® addresses and automates a number of pain points specifically described in the circular. It is a robust and easy-to-use tool, actively maintained and documented, and provides an integrated solution on a tried and trusted platform for data management.

Data Controller

Data Controller is the product of a UK company with a singular focus on SAS Web Apps.
